Most agencies don't have a centralized email management problem until they have an incident. Then they realize they never had control at all. A client domain goes quiet. Inbound invoices stop arriving. The admin can't log in. Everyone says "we changed nothing" — and DNS looks different anyway. The postmortem blame cycle starts, and nobody can answer the one question that matters: who owns this mailbox, and who can reset it?

This is a composite postmortem built from the same root failures that keep surfacing: offboarding gaps, reset abuse, and ownership drift. For the structural control model that prevents this across every client domain you manage, see the operator's guide to customer email management. This article is the incident report.

Centralized email management isn't an IT luxury for agencies — it's what separates a ten-second answer from a three-day blame cycle. Without it, you're already in the incident. You just haven't triggered it yet.

What Centralized Email Management Actually Means

Centralized email management is the practice of consolidating control over all mailboxes, domains, DNS records, and access credentials into a single auditable plane — rather than letting ownership scatter across individual accounts, shared logins, and tribal knowledge. For agencies managing client email infrastructure, it means you can answer four questions instantly: who owns each mailbox, who can reset it, what changed last, and how do you roll it back.

If you can't answer all four, you don't have centralized email management. You have chaos with a working mail server. The incident is a matter of when, not if.
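
As a rough illustration, the four questions map onto a very small data model. The sketch below is hypothetical: `MailboxRecord`, `ChangeEvent`, and `four_answers` are names invented for this example, not part of any platform's API, and a real inventory would live in a database rather than in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ChangeEvent:
    """One logged change to a mailbox (hypothetical audit-log entry)."""
    at: datetime
    actor: str
    description: str
    rollback: str  # documented way to undo this change


@dataclass
class MailboxRecord:
    """Hypothetical inventory entry for a single mailbox."""
    address: str
    owner: str                  # a named person or role, never "the agency"
    reset_approvers: list[str]  # who may authorize a reset
    changes: list[ChangeEvent] = field(default_factory=list)


def four_answers(record: MailboxRecord) -> dict[str, str]:
    """Answer the four control questions for one mailbox."""
    last = max(record.changes, key=lambda c: c.at, default=None)
    return {
        "owner": record.owner or "UNOWNED",
        "can_reset": ", ".join(record.reset_approvers) or "NOBODY ASSIGNED",
        "last_change": (
            f"{last.at:%Y-%m-%d} by {last.actor}: {last.description}"
            if last else "no recorded changes"
        ),
        "rollback": last.rollback if last else "n/a",
    }


record = MailboxRecord(
    address="invoices@client.example",
    owner="Dana (Accounting lead)",
    reset_approvers=["Dana", "agency-oncall"],
    changes=[
        ChangeEvent(
            at=datetime(2024, 3, 1, tzinfo=timezone.utc),
            actor="agency-oncall",
            description="added forwarding to archive@client.example",
            rollback="delete the forwarding rule",
        )
    ],
)
answers = four_answers(record)
for question, answer in answers.items():
    print(f"{question}: {answer}")
```

If any field comes back as "UNOWNED" or "NOBODY ASSIGNED", that mailbox is already in Phase 1 of the timeline that follows.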

The Incident Timeline: Normal Drift to Crisis

An agency email incident follows a predictable four-phase pattern. Ownership drifts as people leave. Resets happen through the easiest path. Recovery endpoints quietly rot. One operational trigger collapses everything. Most agencies miss the first three phases because mail is still flowing, and that's exactly what makes centralized email management so easy to defer and so expensive once you have.

Phase 1: Normal drift, normal excuses

A key person leaves. Their mailbox stays active "for continuity." No owner gets assigned. A shared admin login exists, usually an old IT credential, used for onboarding and left as the emergency key. Forwarding rules appear. DNS gets edited on a Friday. SPF grows, one include at a time, until it passes the 10-DNS-lookup limit and breaks. Nothing explodes, so the behavior gets reinforced.

Phase 2: The environment becomes brittle

Brittle means one more change breaks it. Multiple admins exist, but nobody can list them. The recovery email points at an unmonitored mailbox. The registrar login belongs to a former contractor. MFA is on "for most accounts," except the ones that matter. Mail still flows. That's the trap.

Phase 3: The trigger

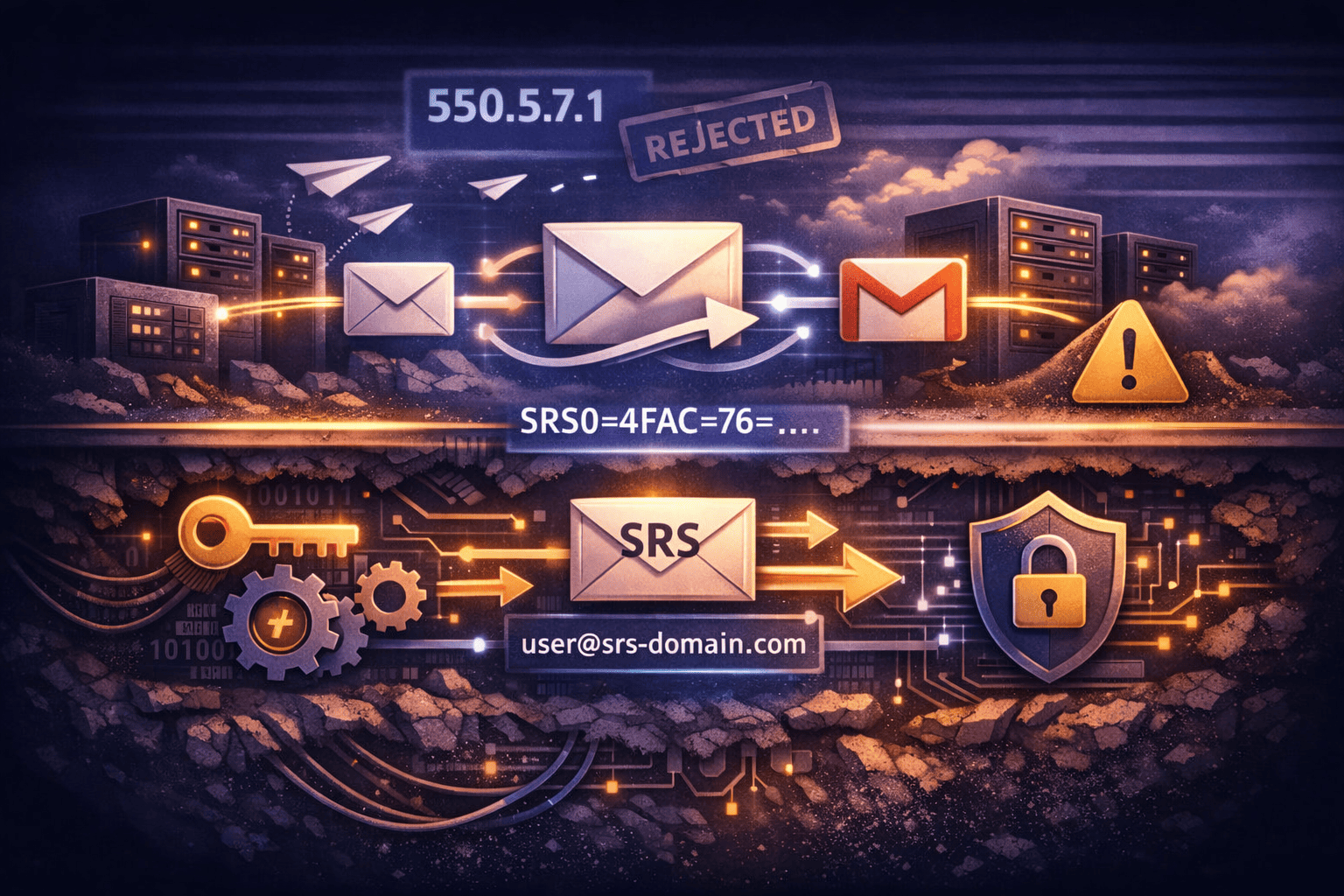

The trigger isn't always a hacker. Often it's operational pressure. A deliverability drop. A billing dispute where a vendor "verifies identity" over the phone. A senior person can't log in and the helpdesk wants to be helpful. If your reset model is weak — if there's no centralized email management layer enforcing who can authorize what — this is where you lose control.

Phase 4: The incident

Common failure modes: reset abuse (someone convinces the service desk to bypass MFA), stale access (a terminated contractor still has a working account), recovery hijack (password resets go to a domain no longer owned), routing persistence (forwarding used as a quiet backdoor).

Symptoms: inbound invoices stop arriving. Outbound mail starts bouncing. The client's admin can't log in. "We changed nothing" — but DNS is different. The client asks one question: who owns this mailbox, and who can reset it? If you can't answer that fast, you're not in control. You're guessing.

Root Cause: Why Agency Email Breaks the Same Way Every Time

The root cause of an agency email incident is structural, not accidental. Ownership isn't explicit, reset authority isn't gated, and recovery is treated like a convenience feature. Without centralized email management, that creates a predictable failure chain: drift → easy reset → persistent access → loss of control. Different org, same mechanics, every time.

Failure 1: Ownership drift

The mailbox that used to belong to "Accounting" now belongs to "whoever inherited the laptop." Or it belongs to nobody — but it's still the recovery endpoint for three production services. Ownership drift creates a hidden authority layer. Nobody votes for it. It just accumulates.

Failure 2: Reset authority creep

The more people who can reset something, the less secure it is. Outsourced helpdesk, MSP techs, agency staff, vendor support — each one is a social-engineering surface. Under pressure, policies get bent. Without a centralized email management policy that explicitly governs reset authority, you're relying on each individual to hold the line when someone's yelling at them.

Failure 3: Recovery endpoints rot

Recovery endpoints are assets. Treat them like production infrastructure. Rot looks like: recovery email goes to an unmonitored mailbox, the domain that receives resets expires, the phone number on file belongs to a former employee. Now your recovery path is a backdoor.
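
One way to make rot visible is to check each recovery endpoint against a few explicit conditions. This is a minimal sketch under assumptions: the `RecoveryEndpoint` fields are invented for illustration, and the 90-day review window is an assumed stand-in for a quarterly audit, not a standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class RecoveryEndpoint:
    """Hypothetical inventory row for one recovery path."""
    service: str          # what this endpoint can recover
    target: str           # recovery email or phone number on file
    monitored: bool       # does a current person actually read it?
    holder_active: bool   # is the named holder still with the organization?
    last_reviewed: date


def rot_findings(ep: RecoveryEndpoint, today: date, max_age_days: int = 90) -> list[str]:
    """Return the reasons this endpoint counts as rotted (empty list = healthy)."""
    findings = []
    if not ep.monitored:
        findings.append("unmonitored target")
    if not ep.holder_active:
        findings.append("holder has left")
    if today - ep.last_reviewed > timedelta(days=max_age_days):
        findings.append(f"not reviewed in over {max_age_days} days")
    return findings


endpoint = RecoveryEndpoint(
    service="registrar login",
    target="it-old@client.example",
    monitored=False,
    holder_active=True,
    last_reviewed=date(2023, 1, 15),
)
print(rot_findings(endpoint, today=date(2024, 1, 15)))
```

The check does not prove an endpoint is safe. It only surfaces the ones nobody has looked at, which is where the backdoors tend to be.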

You've seen the public versions: service desk reset abuse where attackers impersonate staff, offboarding gaps where terminated employees retain access long enough to export data, expired-domain reset vectors where password resets land at a re-registered domain. Different org. Same mechanics.

Five Signals You Missed Before the Incident

Signals are the small operational tells that ownership and recovery are drifting. They show up as minor annoyances — until the day they become the incident. The best operators running centralized email management treat these signals like smoke alarms: not proof of fire, but a reason to check the wiring immediately.

Signal 1: The agency knows user passwords

If your agency can log in as the user, you have shared secrets. Shared secrets cause three problems: no accountability (you can't prove who did what), no clean offboarding (passwords live in tickets and chats), and permanent support load (clients keep coming back for "just one more reset"). The "fast" approach becomes the expensive one.

Signal 2: Resets are possible without a second channel

If a reset can happen based on a phone call or a forwarded email, it will. If you can't describe your reset flow in one paragraph, it's probably broken.

Signal 3: Forwarding exists without a paper trail

Forwarding rules aren't "convenience." They're persistence. If a forwarding rule exists and nobody can show the ticket that created it, assume it's risky. Forwarding is how ghost access survives staff turnover. Without centralized email management, you can't even audit what forwarding rules exist across 20 client domains — let alone know which ones were intentional.
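
If you can export forwarding rules at all, the paper-trail check is simple to automate. The sketch below assumes a hypothetical export format (`ForwardingRule` and its fields are made up for this example; every platform exposes rules differently) and flags anything without a ticket or an owner, listing external destinations first.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ForwardingRule:
    """Hypothetical export row; real platforms expose this differently."""
    domain: str
    mailbox: str
    forwards_to: str
    ticket: Optional[str] = None  # reference to the request that created the rule
    owner: Optional[str] = None   # named person accountable for the rule


def is_external(rule: ForwardingRule) -> bool:
    """True when mail leaves the client's own domain."""
    return rule.forwards_to.rsplit("@", 1)[-1].lower() != rule.domain.lower()


def forwarding_findings(rules: list[ForwardingRule]) -> list[tuple[ForwardingRule, list[str]]]:
    """Flag rules with no ticket or no owner, external destinations first."""
    findings = []
    for rule in rules:
        reasons = []
        if not rule.ticket:
            reasons.append("no ticket")
        if not rule.owner:
            reasons.append("no owner")
        if reasons:
            findings.append((rule, reasons))
    # sorted() is stable, so input order is kept within each group.
    return sorted(findings, key=lambda f: not is_external(f[0]))


rules = [
    ForwardingRule("client.example", "info", "sales@client.example", ticket="T-1042", owner="Dana"),
    ForwardingRule("client.example", "ap", "ap-team@client.example"),
    ForwardingRule("client.example", "ceo", "someone@unknown.example", owner="Dana"),
]
for rule, reasons in forwarding_findings(rules):
    print(f"{rule.mailbox}@{rule.domain} -> {rule.forwards_to}: {', '.join(reasons)}")
```

External rules sort to the top because they are the ones that move mail outside the client's control.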

Signal 4: Domain control is assumed, not verified

Teams assume registrar and DNS control because "we set it up years ago." Then you check and find the registrar is in a founder's personal email, renewal notices go to someone who left, and DNS admin is a freelancer. If you lose domain control, you lose email. Full stop.

Signal 5: No DNS rollback baseline

Here's the operator rule:

# The cost of DNS mistakes:

MX misconfiguration → inbound mail stops

SPF misconfiguration → outbound mail gets rejected

DKIM misconfiguration → signatures fail verification and DMARC alignment breaks

DMARC misconfiguration → can silently block real mail

DNS isn't set-and-forget. It's the control surface. If you don't have known-good values written down, you don't have a rollback; you have downtime. Proper centralized email management means you can restore a domain's DNS baseline from documentation, not memory.
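
A baseline only helps if you can compare it to what is live. Here is a minimal sketch of that comparison, assuming you already have both sides as plain dictionaries. The `dns_drift` function, the `"TYPE name"` key format, and the example hostnames are all illustrative. Fetching the live values (with `dig` or a DNS library) is left out.

```python
def dns_drift(baseline: dict[str, set[str]], current: dict[str, set[str]]) -> dict[str, dict[str, set[str]]]:
    """Compare live record values against the documented baseline.

    Keys are "TYPE name" strings, values are sets of record data.
    Only entries whose values differ appear in the result.
    """
    drift = {}
    for key in sorted(baseline.keys() | current.keys()):
        expected = baseline.get(key, set())
        actual = current.get(key, set())
        if expected != actual:
            drift[key] = {
                "missing": expected - actual,     # in the baseline, gone from live DNS
                "unexpected": actual - expected,  # live in DNS, never documented
            }
    return drift


baseline = {
    "MX client.example": {"10 mx1.mailhost.example."},
    "TXT client.example": {"v=spf1 include:mailhost.example ~all"},
}
current = {
    "MX client.example": {"10 mx1.mailhost.example."},
    "TXT client.example": {"v=spf1 include:mailhost.example include:unknown.example ~all"},
}
for key, diff in dns_drift(baseline, current).items():
    print(key, diff)
```

The "missing" side is your rollback: those are the values to put back. The "unexpected" side is your investigation: somebody added them, and you want to know who.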

The Control Model Fix

The control model fix is a minimum viable system that separates ownership from access, makes reset and recovery provable, and governs persistence features like forwarding and catch-all. Centralized email management done right means you can answer four questions without a phone tree: who owns what, who can change what, what changed last, and how do you undo it.

This isn't more process. It's less chaos.

| Control area | Old way (what breaks) | New way (operator model) |
|---|---|---|
| Mailbox ownership | Whoever inherited the account | Named owner per mailbox, documented and current |
| Admin access | Shared credentials in a doc | Role-based, auditable, no shared secrets |
| Password resets | Helpdesk can reset on verbal request | User-driven by default; helpdesk resets require authorization |
| Recovery endpoints | Whatever email was on file years ago | Monitored, audited, rotated on a schedule |
| Forwarding rules | Added ad hoc, never removed | Off by default; time-bounded and logged when enabled |
| DNS baseline | Nobody has written it down | Known-good values documented per domain |
| Catch-all routing | Always-on, no audit trail | Off by default; enabled only with documented purpose and owner |
| Onboarding | Admin sets password and sends it in Slack | User-driven invite; mailbox owner sets their own credentials |

Five hard rules that make this work:

- A mailbox has an owner. Always. Owner means a named person or a role mailbox with assigned people — not "the agency."

- Admins manage provisioning, not secrets. Admins can create and suspend access. They should never know a mailbox's long-term password.

- Resets are user-driven by default. Helpdesk resets are the exception, not the rule.

- Recovery endpoints are production assets. Audit them quarterly. Rotate recovery codes after use.

- Persistence features are governed. Forwarding and catch-all off by default; time-bounded and logged when enabled.

This is where the email platform you're running either helps or fights you. Per-seat suites aren't designed for portfolio management — every new domain spawns more admin accounts, more billing seats, more places for ownership to drift. Centralized email management at scale requires infrastructure built around it, not bolted onto it.

TrekMail uses invite-based provisioning: you send a one-time setup link, the mailbox owner picks their local-part, sets their own password, and receives a one-time recovery code. The admin never touches a password. No shared secrets means no credential leak from tickets, no support debt from forgotten logins, no offboarding cleanup that takes three weeks.

For agencies, pooled storage and domain-based billing mean you're not paying a per-user tax while maintaining centralized email management across a client portfolio. Bulk mailbox provisioning works without turning your admin dashboard into a credential repository.

Running email for multiple clients? You need one control plane, not fifteen admin panels.

TrekMail gives agencies invite-based provisioning, pooled storage, per-domain DNS tooling, and domain-based billing that doesn't punish you for growing. No shared secrets. No per-user tax. One place to answer the ownership question — in ten seconds, not three days.

Agency plan handles 1,000+ domains at $23.25/month. Starter plan begins at $3.50/month for up to 50 domains.

Compare plans → | Start a 14-day free trial (credit card required)

Incident Runbook: What to Do When It Breaks

This is the section teams skip. They improvise instead. Don't. A runbook for centralized email management failures is the short, strict set of steps you execute when adrenaline is high and everyone wants shortcuts. It prevents creative fixes, forces evidence collection, and reduces time-to-restore without creating new security debt.

A) Stabilize (first 15 minutes)

Freeze changes. Stop live edits to DNS, routing, and forwarding until you have a plan. Three people "trying fixes" in parallel is how you triple your downtime.

Define blast radius. List all affected domains and mailboxes. If you can't list them, you're not ready to touch anything. This is one of the core payoffs of centralized email management — you have a canonical, current list. Without it, you're guessing at scope while the incident grows.

Kill obvious persistence paths. Temporarily disable external forwarding and catch-all routing if you can do it without breaking critical workflows. Document why if you can't.

B) Prove ownership before you reset anything

Identify the mailbox owner. Identify who can approve resets. Verify domain control at the registrar and DNS provider right now — not "we set it up years ago."

You don't own a domain because you paid for it once. You own it because you can log in today.

C) Reset safely

Preferred path: user-driven reset via a verified out-of-band channel. If an operator must reset:

# Safe reset protocol

1. Generate a unique, random, one-time temporary credential

2. Force password change at first login

3. Notify mailbox owner via out-of-band channel (not email to the affected domain)

4. Log: who authorized, who executed, timestamp
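
The four steps above can be sketched in a few lines. This is an illustration under assumptions, not any platform's reset API: `operator_reset`, `ResetLogEntry`, and the `"kind:target"` channel format are invented here, and the actual calls that set the credential and send the notice are deliberately left out.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ResetLogEntry:
    """Step 4: who authorized, who executed, when. Never the credential itself."""
    mailbox: str
    authorized_by: str
    executed_by: str
    notified_via: str
    timestamp: datetime


def is_out_of_band(mailbox: str, channel: str) -> bool:
    """A channel such as "sms:+15550100" or "email:x@other.example" is
    out-of-band unless it is email on the affected domain."""
    kind, _, target = channel.partition(":")
    if kind != "email":
        return True
    return target.rsplit("@", 1)[-1].lower() != mailbox.rsplit("@", 1)[-1].lower()


def operator_reset(mailbox: str, authorized_by: str, executed_by: str, notify_channel: str):
    if not authorized_by.strip():
        raise PermissionError("no documented authorization for this reset")
    if not is_out_of_band(mailbox, notify_channel):
        raise ValueError("owner must be notified outside the affected domain")

    # Step 1: unique, random, one-time temporary credential.
    temp_credential = secrets.token_urlsafe(18)
    # Step 2 happens on the mail platform: set the credential with
    # "force change at first login" enabled (platform call not shown).
    # Step 3: the caller sends the notice over notify_channel (not shown).
    entry = ResetLogEntry(mailbox, authorized_by, executed_by, notify_channel,
                          datetime.now(timezone.utc))
    return temp_credential, entry


temp, entry = operator_reset("ap@client.example", "Dana", "agency-oncall", "sms:+15550100")
print(entry)
```

The log entry has no field for the credential, so there is nothing to leak when it gets pasted into a ticket.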

# Never:

- Email a plaintext password

- Paste credentials into a ticket comment

- Execute a verbal helpdesk reset without documented authorization

D) Remove persistence

This is where incidents hide long after you think they're over. Audit every persistence layer before you declare the incident closed:

- Forwarding rules across all affected domains

- External aliases

- Delegated access and shared mailbox permissions

- App passwords and legacy authentication tokens

- OAuth connections and long-lived API tokens

If you skip this step, you've reset the front door while the back door stays open. The incident isn't over.

E) Restore and document

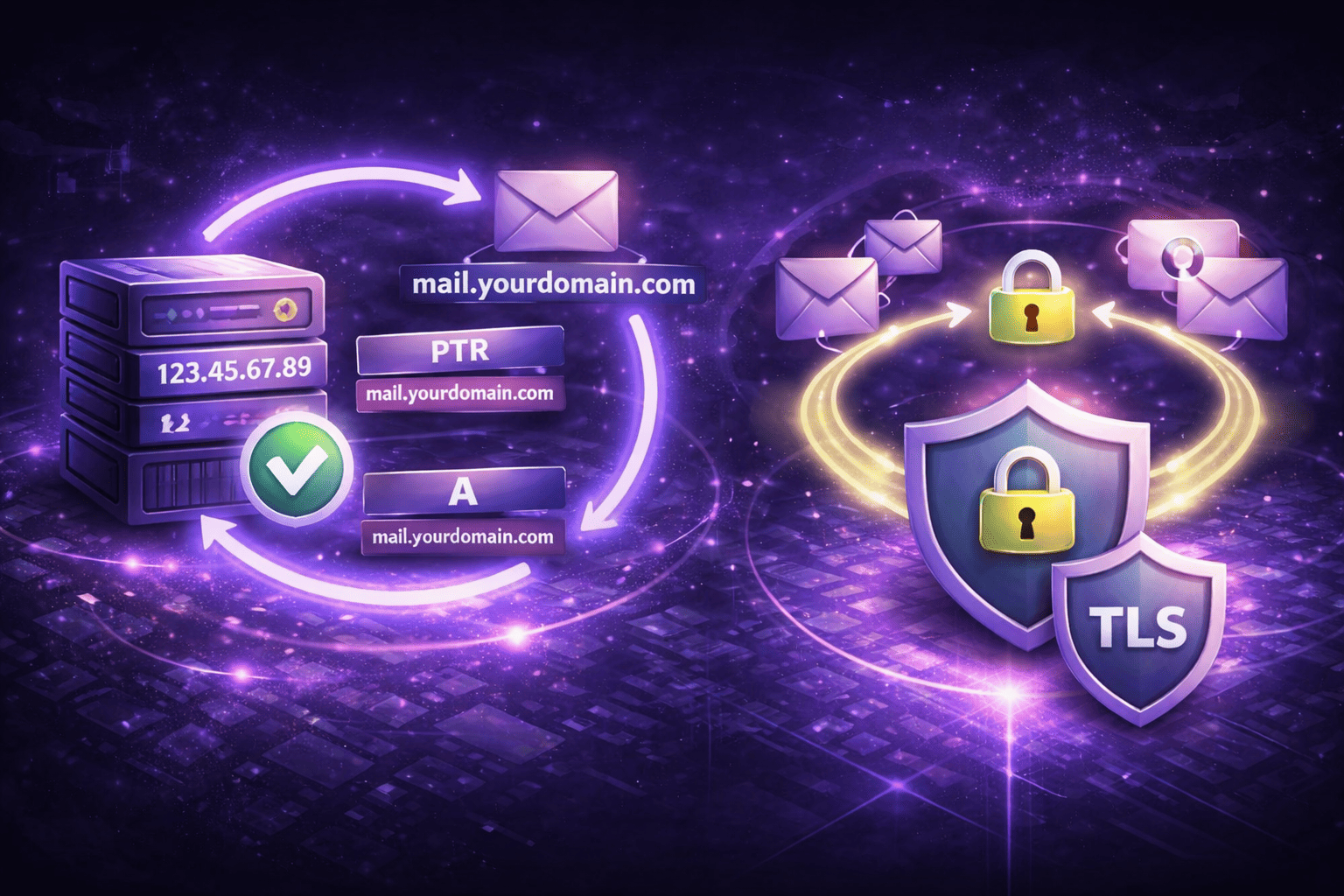

Restore known-good DNS values from your recorded baseline. TrekMail's required DNS records guide covers the exact records per domain if you need a reference point.

# DNS baseline to verify after incident

MX: [your provider's MX record and priority]

SPF: "v=spf1 include:yourmailprovider.com ~all"

DKIM: [selector]._domainkey TXT [your public DKIM key]

DMARC: _dmarc TXT "v=DMARC1; p=quarantine; rua=mailto:dmarc@yourdomain.com"

Confirm mail flow in both directions. Then write down what changed, when, who changed it, and what fixed it. That documentation becomes the foundation of your centralized email management baseline for next time, and there will be a next time.

For SPF specifics, RFC 7208 is the authoritative reference for what each SPF qualifier actually does. Worth keeping bookmarked when someone breaks the SPF record in a hurry and you need to know what "~all" (softfail) vs "-all" (fail) means for deliverability.
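
For a quick sanity check of a record someone just edited, a small parser is enough. The sketch below reads the qualifier on the `all` mechanism and counts the top-level terms that trigger DNS lookups. The qualifier meanings and the limit of 10 lookups come from RFC 7208. The helper names are made up for this example, and the count is only a lower bound because it does not follow nested includes.

```python
import re
from typing import Optional

QUALIFIERS = {"+": "pass", "-": "fail", "~": "softfail", "?": "neutral"}
# Terms that trigger DNS lookups and count toward the limit of 10.
LOOKUP_TERMS = {"include", "a", "mx", "ptr", "exists", "redirect"}


def split_term(term: str) -> tuple[str, str]:
    """Return (qualifier, name) for one SPF term; the default qualifier is "+"."""
    qualifier = term[0] if term[0] in QUALIFIERS else "+"
    body = term[1:] if term[0] in QUALIFIERS else term
    name = re.split(r"[:/=]", body, maxsplit=1)[0].lower()
    return qualifier, name


def all_policy(spf: str) -> Optional[str]:
    """Result name for senders not matched earlier, or None if there is no "all"."""
    for term in spf.split()[1:]:  # skip the "v=spf1" version tag
        qualifier, name = split_term(term)
        if name == "all":
            return QUALIFIERS[qualifier]
    return None


def top_level_lookups(spf: str) -> int:
    """Count lookup-triggering terms in this record only (nested includes add more)."""
    return sum(1 for term in spf.split()[1:] if split_term(term)[1] in LOOKUP_TERMS)


record = "v=spf1 include:yourmailprovider.com ~all"
print(all_policy(record), top_level_lookups(record))
```

What a receiver does with a softfail or a fail is its own policy decision, so treat the output as a description of what you published, not a prediction of delivery.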

Why Centralized Email Management Fails Without the Right Infrastructure

The control model above works in a spreadsheet for three domains. At thirty domains it falls apart unless your email platform is built for it. Most per-seat suites aren't — they're designed around individual users, not domain portfolios. Every new client means more admin accounts, more billing seats, more places for ownership to drift. The platform fights your process.

Multi-domain email hosting on a pooled storage model removes the per-seat punishment while keeping a single control plane. That's the infrastructure side of centralized email management — one place to provision, one place to audit, one place to offboard.

For client offboarding — the single most common trigger for agency email incidents — mailbox suspension at the domain level means you're not chasing down individual accounts across five platforms. For client email management at any real volume, that's the difference between a clean handoff and a three-week cleanup.

Google Postmaster Tools can surface domain reputation and authentication pass rates across a client portfolio — a useful early signal that something in your centralized email management stack is misconfigured before it becomes an incident.

The Pre-Incident Audit You Can Run Right Now

You don't need a full security engagement. Run four checks today:

- List every active admin account across every domain. If you can't do this in five minutes, ownership is already drifting.

- Verify domain control at the registrar. Can you log in today? Are renewal notices going to an active address?

- Pull a full list of forwarding rules. Every rule should have a named purpose and a named owner. Anything that doesn't is a finding.

- Check recovery endpoints. Are they active? Do they go to monitored inboxes? When were they last reviewed?

Centralized email management means you have clean answers to all four before anyone asks. If any check surfaces something unrecognized, treat it as a live finding — not a "we'll get to it" item.

Conclusion

The incident question is always the same: who owns this mailbox, and who can reset it? If you can't answer that in ten seconds, you don't have centralized email management — you have a shared login and a hope that nothing goes wrong.

The fix isn't complicated. It's a decision to treat email infrastructure like infrastructure. Named owners. Explicit reset authority. Audited recovery endpoints. No shared secrets. Forwarding rules with a paper trail. DNS baselines you can actually restore from.

TrekMail is built for the agencies that have made that decision. Domain-based billing instead of per-seat punishment. One dashboard instead of fifteen admin panels. Invite-based onboarding so admins never touch a password. Pooled storage that doesn't scale your costs with headcount.

Compare plans — the Agency plan handles 1,000+ domains at $23.25/month. The Starter plan covers 50 domains at $3.50/month. Or start a 14-day free trial and run your first domain portfolio with a real control plane under it.

The incident is coming. The only question is whether you answer the ownership question in ten seconds or three days.